Escaping the Tutorial Loop: Building a CLI ETL Tool in Rust (From Scratch to Generic)

How I finally learned Rust by building the one tool every Data Engineer understands: An ETL Pipeline.

I’ve done the crash courses. I’ve read the book. But like many developers learning Rust, I kept getting stuck in the "tutorial loop". I understood the syntax, but I didn't know how to start a project.

As a Data Engineer, I decided to stop following generic tutorials and build something I actually understand: an ETL pipeline.

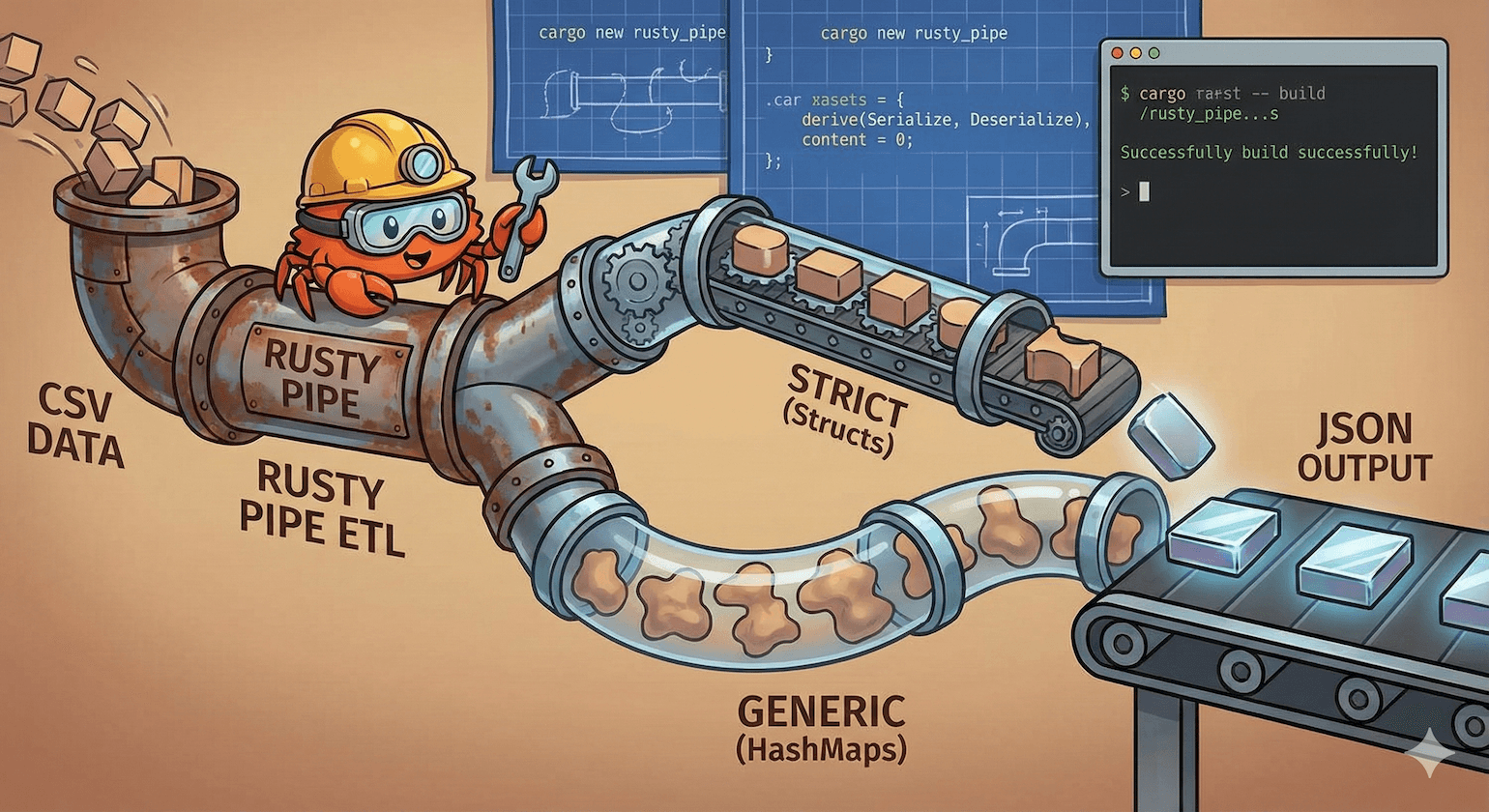

In this post, I’ll walk you through building "Rusty Pipe", a command-line tool. We will start by building a strict, type-safe version, and then refactor it into a generic tool that can handle any CSV file.

Step 1: The Setup

First, we use Cargo (Rust's package manager) to create the project.

cargo new rusty_pipe

cd rusty_pipe

We need a few "crates" (libraries). Add these to your Cargo.toml:

    [dependencies]
    clap = { version = "4.5", features = ["derive"] }   # CLI arguments
    serde = { version = "1.0", features = ["derive"] }  # Serialization
    serde_json = "1.0"
    csv = "1.3"

Step 2: Create Dummy Data

Before we code, let's create the data we want to process. Create a file named products.csv in your project root:

    id,name,category,price
    1,Apple,Fruit,1.20
    2,Laptop,Electronics,999.99
    3,Banana,Fruit,0.50
    4,TV,Electronics,500.00

Step 3: Version 1 - The "Strict" Approach (Type Safety)

In Python/Pandas, types are often inferred. In Rust, we usually define the shape of our data upfront using a struct. This acts as a contract: if the CSV has bad data (like text in a price column), deserializing that row fails with an error right away instead of letting the bad value through.
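
To make the "contract" idea concrete, here is a minimal std-only sketch of what a typed field buys you. It does not use the csv crate; `parse_price` is a hypothetical helper invented for this illustration, standing in for the conversion that happens when a row is deserialized into a struct with an `f64` field.

```rust
use std::num::ParseFloatError;

// Hypothetical helper (not part of the tool): convert one raw CSV field
// into a typed price, or return the parse error.
fn parse_price(field: &str) -> Result<f64, ParseFloatError> {
    field.trim().parse::<f64>()
}

fn main() {
    // A well-formed value converts cleanly into an f64.
    println!("{:?}", parse_price("1.20"));

    // Text in a numeric column surfaces as an Err instead of slipping through.
    println!("{:?}", parse_price("cheap"));
}
```

The point is that the failure shows up at the boundary where text becomes a typed value, which is the same place the strict version of the tool reports a bad row.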

Here is the code for src/main.rs. It reads the CSV, filters out cheap items (anything priced at $1.00 or less), and writes the rest to JSON.

    use clap::Parser;
    use serde::{Deserialize, Serialize};
    use std::error::Error;
    use std::fs;

    // 1. Define CLI Arguments
    #[derive(Parser)]
    struct Cli {
        input: String,
        output: String,
    }

    // 2. Define the Schema (The Contract)
    #[derive(Debug, Serialize, Deserialize)]
    struct Product {
        id: u32,
        name: String,
        category: String,
        price: f64,
    }

    fn main() -> Result<(), Box<dyn Error>> {
        let args = Cli::parse();
        let mut rdr = csv::Reader::from_path(args.input)?;
        let mut clean_data: Vec<Product> = Vec::new();

        // 3. Read rows one at a time; only rows that pass the filter are kept in memory
        for result in rdr.deserialize() {
            let record: Product = match result {
                Ok(rec) => rec,
                Err(e) => {
                    eprintln!("Skipping bad row: {}", e);
                    continue;
                }
            };

            // 4. Business Logic: Filter cheap products
            if record.price > 1.0 {
                clean_data.push(record);
            }
        }

        // 5. Write to JSON
        let json_output = serde_json::to_string_pretty(&clean_data)?;
        fs::write(args.output, json_output)?;
        println!("Success! Processed {} records.", clean_data.len());

        Ok(())
    }
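
The read-skip-filter loop above can also be expressed as an iterator chain. The sketch below is std-only so it runs without any crates: the rows are a hand-built `Vec` rather than the output of `rdr.deserialize()`, the error type is a plain `String` stand-in, and `keep_valid_above` is a hypothetical name chosen for this example.

```rust
#[derive(Debug)]
struct Product {
    name: String,
    price: f64,
}

// Keep only rows that parsed successfully and cost more than `min_price`.
// Bad rows are logged to stderr and dropped, mirroring the loop version.
fn keep_valid_above(rows: Vec<Result<Product, String>>, min_price: f64) -> Vec<Product> {
    rows.into_iter()
        .filter_map(|row| match row {
            Ok(product) => Some(product),
            Err(e) => {
                eprintln!("Skipping bad row: {}", e);
                None
            }
        })
        .filter(|product| product.price > min_price)
        .collect()
}

fn main() {
    // Stand-in input: two good rows and one row that failed to parse.
    let rows = vec![
        Ok(Product { name: "Apple".to_string(), price: 1.20 }),
        Err("invalid float literal".to_string()),
        Ok(Product { name: "Banana".to_string(), price: 0.50 }),
    ];

    for product in keep_valid_above(rows, 1.0) {
        println!("{} costs {}", product.name, product.price);
    }
}
```

Whether the loop or the chain reads better is a matter of taste; the behavior is the same, and both only hold on to the rows that survive the filter.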

Running Version 1

Run this in your terminal:

cargo run -- products.csv output.json

If you check output.json, you will see it correctly filtered out the "Banana" (which was $0.50):

    [
      {
        "id": 1,
        "name": "Apple",
        "category": "Fruit",
        "price": 1.2
      },
      {
        "id": 2,
        "name": "Laptop",
        "category": "Electronics",
        "price": 999.99
      },
      {
        "id": 4,
        "name": "TV",
        "category": "Electronics",
        "price": 500.0
      }
    ]

Step 4: The Refactor - Making it Generic

The strict version is great for production pipelines where the schema is known. But what if I want to use this tool on any CSV file, regardless of columns?

We can swap the struct for a HashMap. This is closer to how Python works: dynamic and flexible.

Changes required:

Remove struct Product.

Import std::collections::HashMap.

Change Vec<Product> to Vec<HashMap<String, String>>.

Here is the updated src/main.rs:

    use clap::Parser;
    use std::collections::HashMap; // Import HashMap
    use std::error::Error;
    use std::fs;

    #[derive(Parser)]
    struct Cli {
        input: String,
        output: String,
    }

    // NOTE: We removed the Product struct!

    fn main() -> Result<(), Box<dyn Error>> {
        let args = Cli::parse();
        let mut rdr = csv::Reader::from_path(args.input)?;

        // Change the Vector to store Maps (Key=String, Value=String)
        let mut clean_data: Vec<HashMap<String, String>> = Vec::new();

        for result in rdr.deserialize() {
            // The csv crate (via serde) maps each header to a key
            // and each field in the row to its value
            let record: HashMap<String, String> = match result {
                Ok(rec) => rec,
                Err(e) => {
                    eprintln!("Skipping bad row: {}", e);
                    continue;
                }
            };

            // We removed the price filtering logic because we
            // don't know if a "price" column exists in a generic file!
            clean_data.push(record);
        }

        let json_output = serde_json::to_string_pretty(&clean_data)?;
        fs::write(args.output, json_output)?;
        println!("Success! Converted {} records.", clean_data.len());

        Ok(())
    }

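Now you can run this same tool on products.csv, users.csv, or logs.csv. It has become a universal converter! One caveat: every value is now written as a JSON string (for example "1.20" rather than 1.2), and the key order within each object can vary between runs because HashMap has no defined iteration order.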
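
Filtering does not have to disappear entirely in the generic version. As a rough sketch, a filter can treat the column as optional: look it up, try to parse it, and only then compare. The helper name `column_above` and the rule that rows without the column are rejected are both assumptions made for this example, not something the tool above does.

```rust
use std::collections::HashMap;

// Hypothetical helper: true only when `column` exists in the record,
// parses as a number, and is greater than `min`.
fn column_above(record: &HashMap<String, String>, column: &str, min: f64) -> bool {
    record
        .get(column)
        .and_then(|raw| raw.trim().parse::<f64>().ok())
        .map_or(false, |value| value > min)
}

fn main() {
    // A record shaped like a row from products.csv.
    let mut apple = HashMap::new();
    apple.insert("name".to_string(), "Apple".to_string());
    apple.insert("price".to_string(), "1.20".to_string());

    // A record from a file that has no "price" column at all.
    let mut user = HashMap::new();
    user.insert("email".to_string(), "a@example.com".to_string());

    println!("apple kept: {}", column_above(&apple, "price", 1.0));
    println!("user kept: {}", column_above(&user, "price", 1.0));
}
```

Because every value in the map is a `String`, the numeric check has to parse at filter time. That is the trade-off against the strict version, where the struct did that work once during deserialization.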

Step 5: The Grand Finale - Install It Globally

Right now, we are running our tool using cargo run. That’s fine for development, but in production, we want a standalone tool that we can run from anywhere—just like grep, jq, or python.

1. Build for Release

Cargo compiles in "debug" mode by default (which is fast to compile but slow to run). Let's build a highly optimized release binary.

cargo build --release

This creates a standalone executable file at ./target/release/rusty_pipe. You can literally email this file to a friend on the same OS and CPU architecture, and it will run. They don't need Rust installed!

2. Install to your System Path

To make it available globally in your terminal, copy it to a directory that is on your PATH.

For Mac/Linux:

sudo cp ./target/release/rusty_pipe /usr/local/bin/

For Windows: You can copy the .exe to any folder that is in your system PATH.

3. Run it like a Pro

Close your terminal, open a new one, navigate to your Desktop (or any folder with a CSV), and run:

rusty_pipe input.csv output.json

Congratulations! You just built and installed your own system-level CLI tool.

Conclusion

We built two versions of an ETL tool:

The Strict Version: Uses structs. Best for known data, ensures type safety, and allows easy filtering (e.g., price > 1.0).

The Generic Version: Uses HashMaps. Best for general-purpose utilities where the column names aren't known ahead of time.

This project covers the core concepts of Rust for Data Engineering: Cargo, Structs, Iterators, and Serde.